TacOS

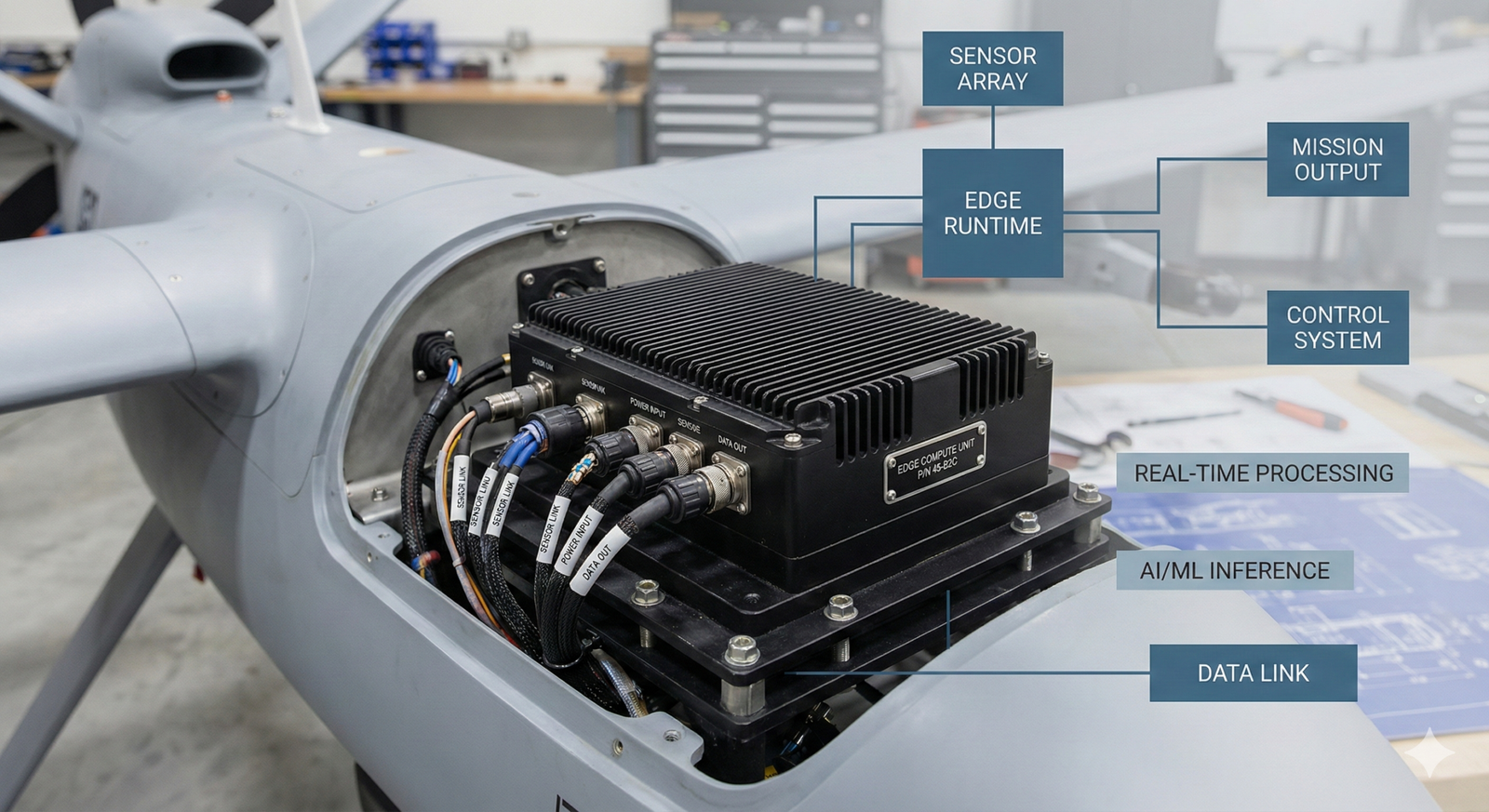

Edge AI Runtime for Autonomous Operations and Sensor Fusion

Execute AI analytics, sensor fusion, and mission logic on small, power-limited hardware.

Offline-capable. Operator-controlled.

Why TacOS?

Most edge AI assumes constant connectivity and developer access. TacOS executes on constrained devices, operates without cloud or GPS, and lets operators adjust workflows without code.

Built for Low-SWaP Constraints

Runs on sub-10W edge platforms.

True offline operation

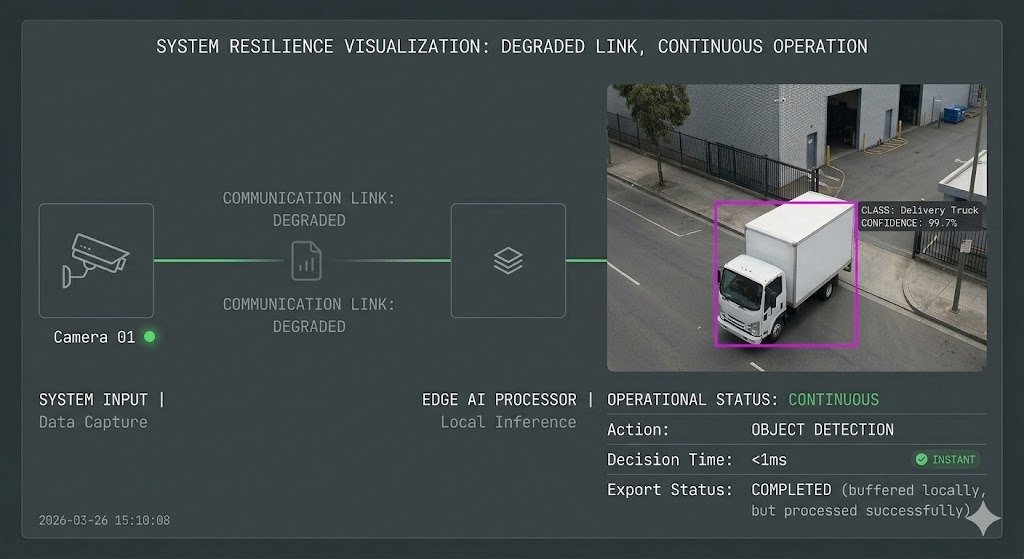

Detect, decide, and act in DDIL environments.

Operator-configurable

Drag-and-drop pipelines. No developers required.

Sensor-to-decision on device

Raw feeds to real-time actions at the edge.

Multi-sensor fusion

Correlate radar, EO/IR, AIS, ADS-B, RF, acoustic, and space feeds.

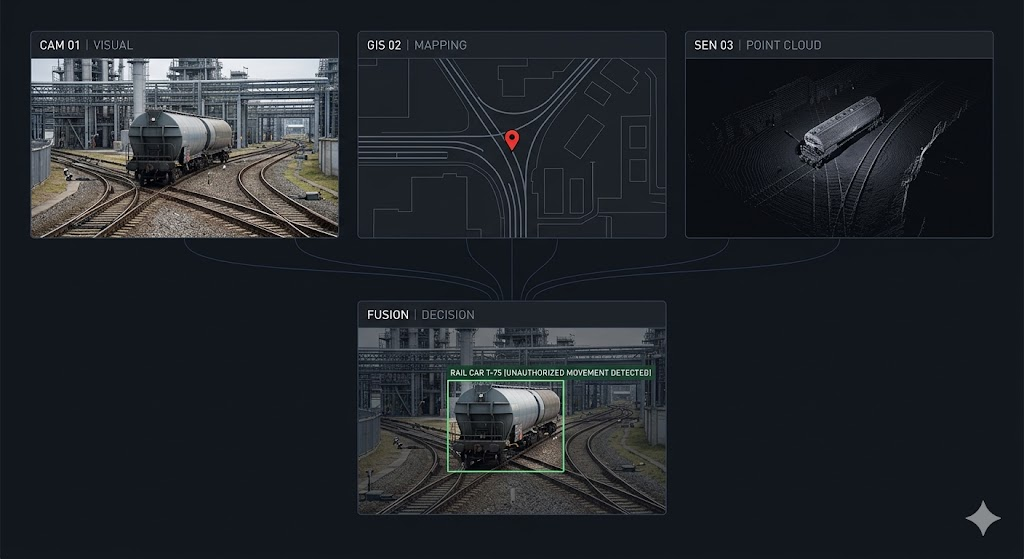

Reason-coded outputs

Provenance, confidence scores, and audit trails on every action.

Capabilities

Detect, track, decide

Millisecond-level response. No backhaul required.

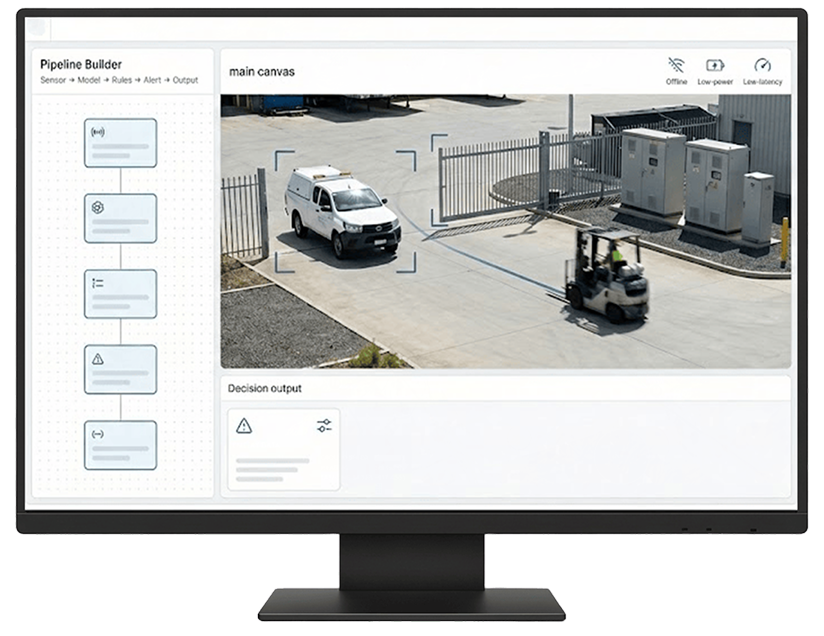

Fuse multiple sensors

Time-aligned, geo-referenced tracks with confidence scoring.

Adapt in the field

Swap models and thresholds without rebuilds.

Coordinate across platforms

Multi-vehicle and swarm support across air, ground, and maritime.

Integrate existing tools

TAK/GIS integration with native bidirectional CoT messaging.

Run standard models

YOLO, MMDet, ONNX, TensorRT. No lock-in.

Generate evidence

Audit-ready bundles for replay and after-action review.

The Stack

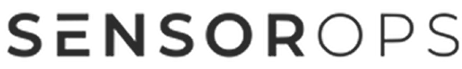

Pipeline Builder

Drag-and-drop sensors, models, rules, outputs. No code.

Model Runtime

ARM/x86, Android/Linux, GPU acceleration.

Sensor Adapters

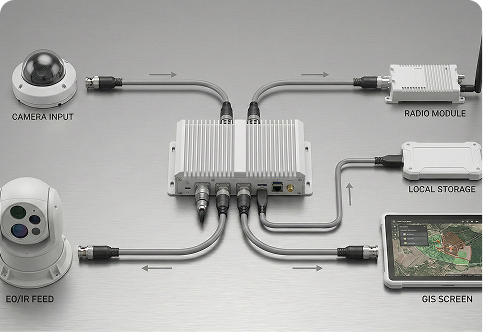

Radar, EO/IR, AIS, ADS-B, RF, acoustic ingest.

Fusion Engine

Multi-sensor correlation, time alignment, geospatial normalization.
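As a rough illustration of what correlation and time alignment can involve, the sketch below pairs detections from two sensors when their timestamps and positions fall inside simple gates. It is not TacOS code: `Detection`, `associate`, the gate values, and the confidence-combination rule are all hypothetical choices made for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    sensor: str        # illustrative label, e.g. "radar" or "eo_ir"
    t: float           # timestamp in seconds on a shared clock
    lat: float         # degrees
    lon: float         # degrees
    confidence: float  # 0.0 to 1.0


def haversine_m(a: Detection, b: Detection) -> float:
    """Great-circle distance between two detections, in meters."""
    r = 6_371_000.0  # mean Earth radius
    p1, p2 = math.radians(a.lat), math.radians(b.lat)
    dp = p2 - p1
    dl = math.radians(b.lon - a.lon)
    h = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(h))


def associate(primary, secondary, max_dt_s=0.5, max_dist_m=50.0):
    """Greedily pair each primary detection with the nearest unused secondary
    detection that passes both the time gate and the distance gate."""
    pairs = []
    used = set()
    for p in primary:
        best, best_d = None, max_dist_m
        for i, s in enumerate(secondary):
            if i in used or abs(p.t - s.t) > max_dt_s:
                continue
            d = haversine_m(p, s)
            if d <= best_d:
                best, best_d = i, d
        if best is not None:
            used.add(best)
            s = secondary[best]
            # Assumed rule: treat the two confidences as independent evidence.
            fused = 1 - (1 - p.confidence) * (1 - s.confidence)
            pairs.append((p, s, round(fused, 3)))
    return pairs
```

A production fusion engine would typically add motion models and track management on top of gating like this; the sketch only shows the alignment and pairing step.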

Mission Logic

Rules, geofences, triggers, checklists at the edge.
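To make the idea of an edge rule concrete, here is a minimal sketch of a geofence check that returns an action together with a reason code. The function names, the reason-code strings, and the 0.7 confidence threshold are assumptions for illustration and do not describe the actual TacOS rule format.

```python
from dataclasses import dataclass


def inside(point, polygon):
    """Ray-casting test: True if (x, y) lies inside the polygon,
    given as a list of (x, y) vertices."""
    x, y = point
    hit = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Count the edges that a horizontal ray from the point crosses.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                hit = not hit
    return hit


@dataclass
class Decision:
    action: str
    reason_code: str
    confidence: float


def evaluate(track_xy, confidence, fence, min_confidence=0.7):
    """Apply one rule: alert when a confident track is inside the fence."""
    if not inside(track_xy, fence):
        return Decision("ignore", "OUTSIDE_GEOFENCE", confidence)
    if confidence < min_confidence:
        return Decision("monitor", "LOW_CONFIDENCE_IN_GEOFENCE", confidence)
    return Decision("alert", "GEOFENCE_BREACH", confidence)
```

Returning the reason code alongside the action is what lets each decision be logged and reviewed later.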

Edge I/O

Camera ingest, radio links, storage, TAK/GIS integration.

Health & Logs

Diagnostics, audit logging, exportable summaries.

Why Teams Trust It

Field-proven

Built for rough comms, variable power, harsh conditions.

Secure by design

RBAC, mTLS, signed artifacts, audit logging, SBOM. PKI-compatible.

Classified environments

Unclassified through Secret.

Store-and-forward

Maintains operations under constrained links.
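Store-and-forward is commonly built as a bounded queue that holds outbound messages while the link is down and drains them in order once it returns. The sketch below shows that pattern under stated assumptions: the `StoreAndForward` class, its `send` callback, and the queue size are hypothetical and are not taken from TacOS.

```python
from collections import deque


class StoreAndForward:
    """Buffer outbound messages while the link is down; drain oldest-first."""

    def __init__(self, send, max_items=1000):
        self._send = send                      # callable returning True on success
        self._queue = deque(maxlen=max_items)  # oldest entries drop when full

    def submit(self, message):
        """Queue a message, then try to deliver everything pending."""
        self._queue.append(message)
        return self.flush()

    def flush(self):
        """Send queued messages in order, stopping at the first failure.
        Returns the number of messages delivered."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent

    def pending(self):
        return len(self._queue)
```

Bounding the queue trades completeness for predictable memory use, which matters on small devices; a real deployment might persist the queue to storage instead.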

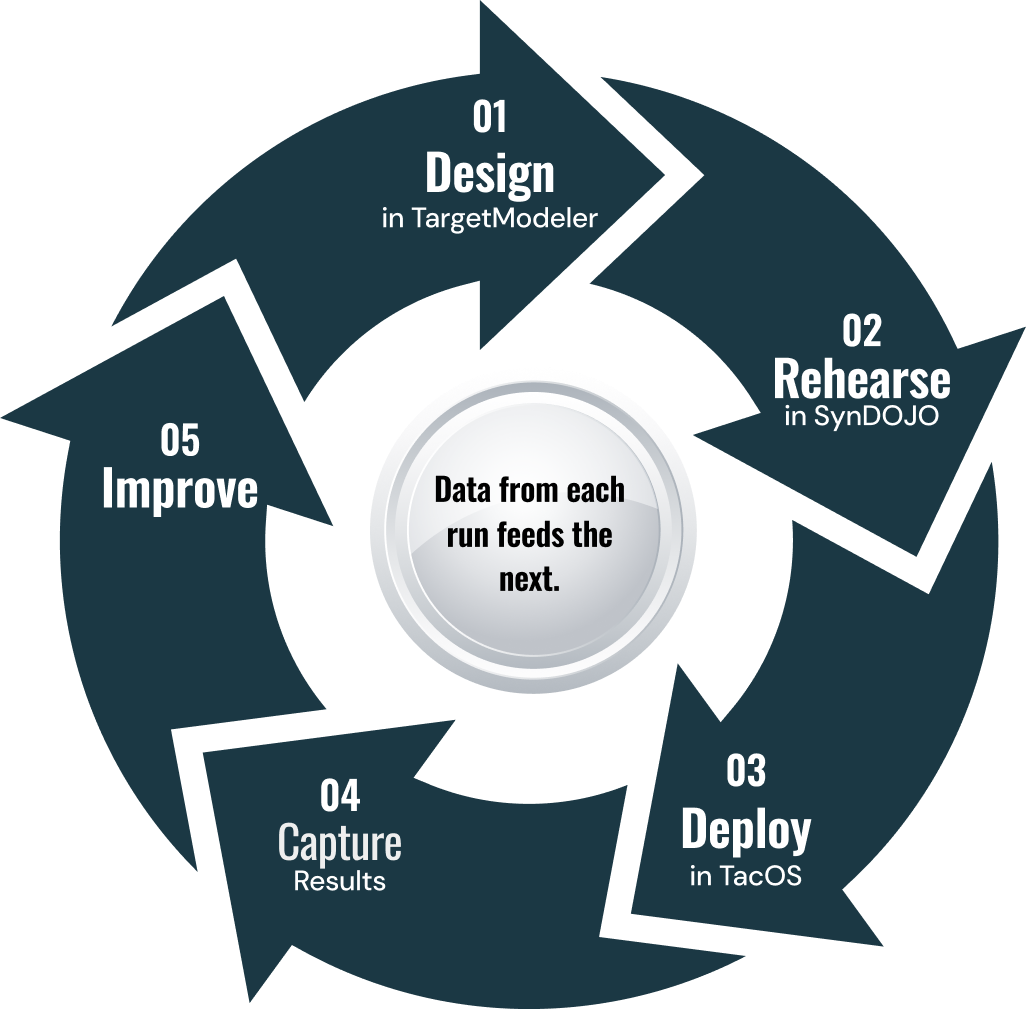

Fits the SensorOps loop

Pairs with TargetModeler and SynDOJO for full lifecycle.

Operational impact

Edge-speed decisions

Detection-to-action in milliseconds, no backhaul.

Bandwidth efficiency

Transmit tracks and alerts instead of raw video.

DDIL resilience

Continue operations when GPS is denied or communications degrade.

Hardware efficiency

High availability on SWaP-constrained platforms.

Audit-ready

Every action logged with reason codes.

Use Cases

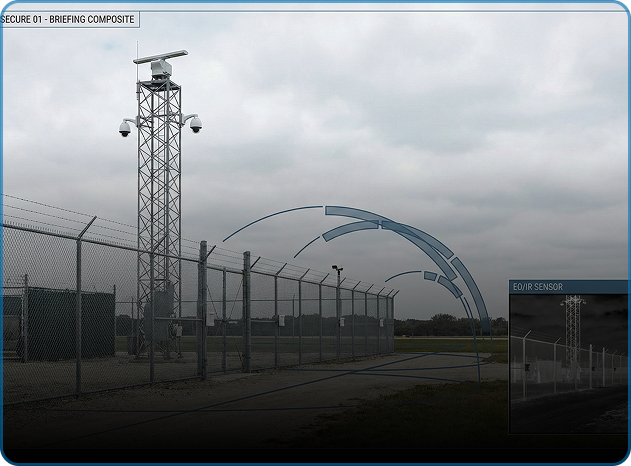

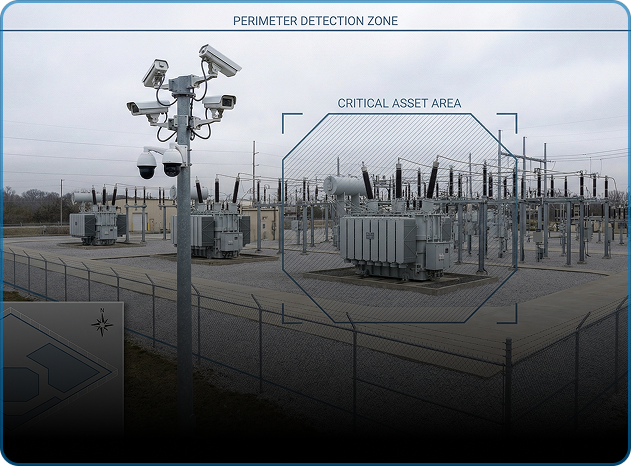

Defense

c-sUAS, UAS ISR, perimeter security, route clearance, EW-degraded operations.

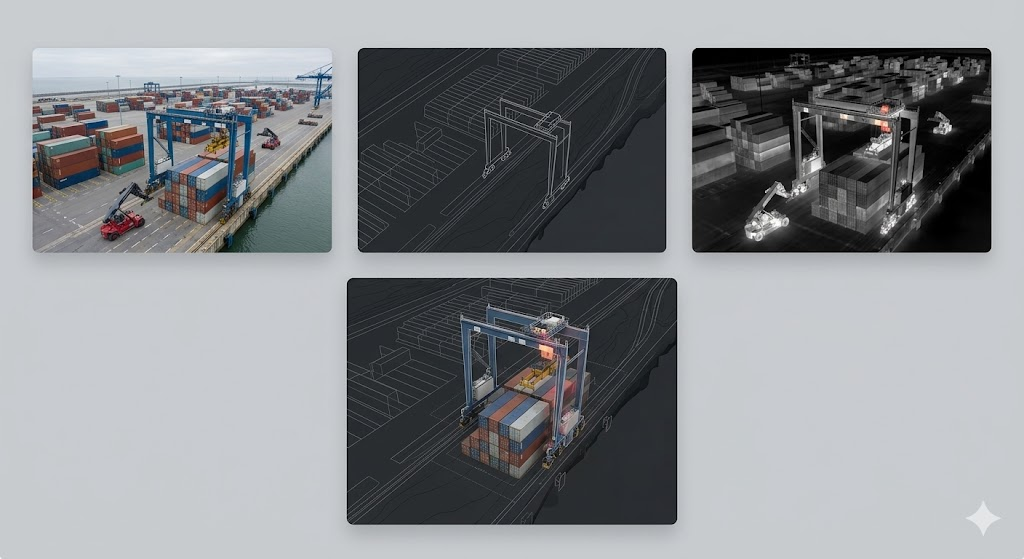

Public Safety & Infrastructure

Mobile command, event security, pipeline patrol, port security.

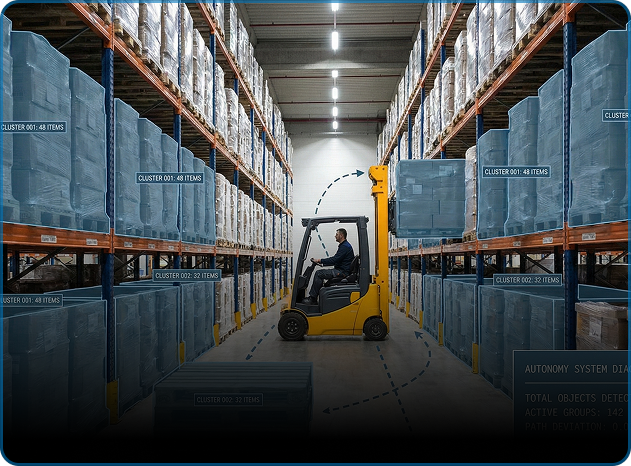

Industrial & Logistics

Yard automation, warehouse counting, inspection QA.

OEMs & system integrators

Drop-in edge runtime that supports customer deployments without designing a new inference stack.

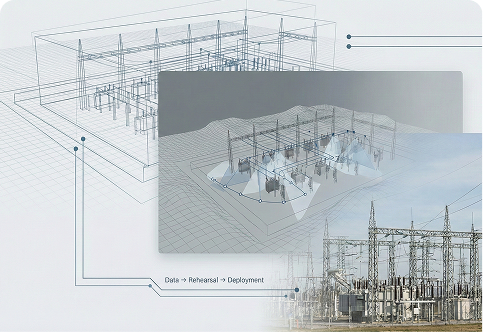

Workflow

Each rep feeds the next. Performance climbs while costs stay flat.

Pricing & deployment

Sub-10W edge platforms to server-class. Linux and Android. ARM and x86. On-prem with optional cloud-assist.

Program Impact at a Glance

Power envelope

Sub-10 W edge targets supported in typical TacOS configurations

Latency

Model-to-action response under 50 ms in representative setups

Deployment timeline

Initial deployment on supported devices commonly completed within a day

Common questions

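Does TacOS work offline?

Yes. Fully on-device processing, decisions, and actions.

Which platforms does it run on?

Linux and Android on ARM and x86. GPU acceleration is supported.

Can operators build pipelines without coding?

Yes. Drag-and-drop, no code.

Which models does it support?

YOLO, MMDet, ONNX, TensorRT. No lock-in.

Does it integrate with TAK?

Native bidirectional CoT messaging.

What security features are included?

RBAC, mTLS, audit logging, SBOM. Secret-certified.

How are models updated in the field?

Upload models via secure transfer. No rebuild needed.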