TargetModeler

Synthetic Data Generation for Electro-Optical/Infrared (EO/IR) Vision Models

Generate balanced, labeled EO/IR datasets in minutes. No cloud. No manual tagging.

Why TargetModeler?

Real-world image collection is slow and expensive, and it misses edge cases. TargetModeler delivers controlled, repeatable synthetic datasets so models improve without waiting on field collection.

Thousands of images in minutes

Mission-realistic EO/IR sets in under 10 minutes.

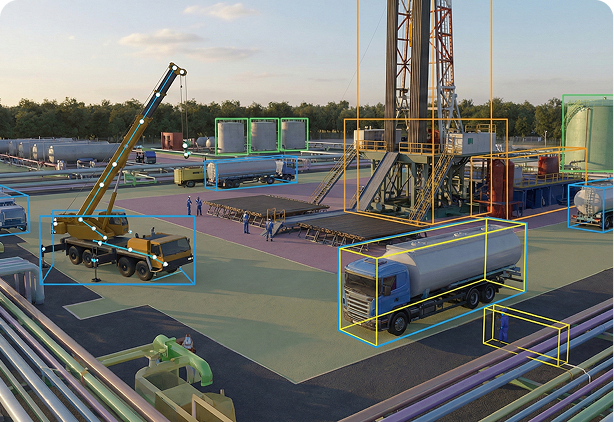

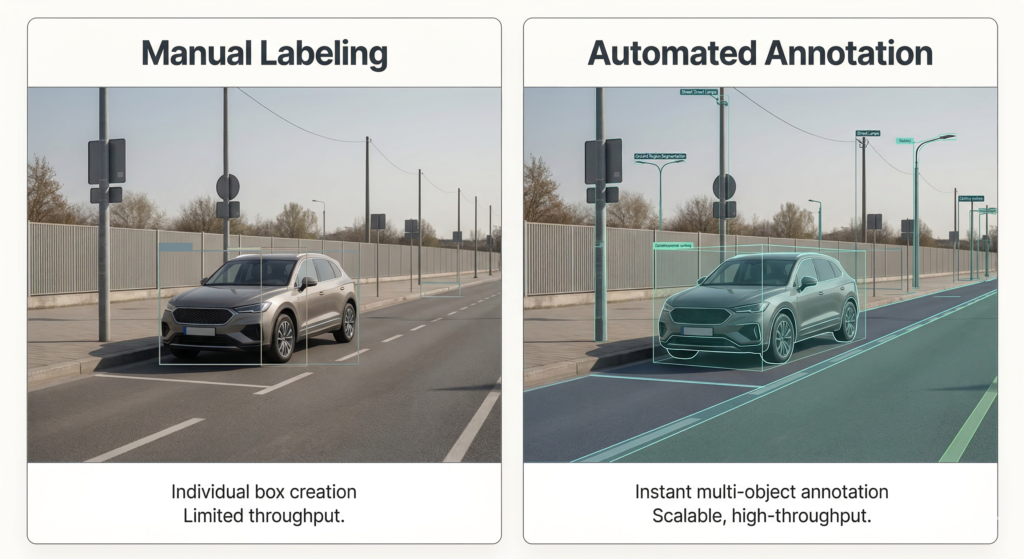

Auto-labeled at scale

Boxes, polygons, masks, keypoints, 3D cuboids.

Balanced by design

Control class ratios, poses, backgrounds, and sensor effects.

No code required

Operators and engineers build and export directly.

Physics-based rendering

Controllable, repeatable, defensible results.

Capabilities

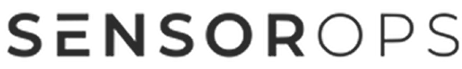

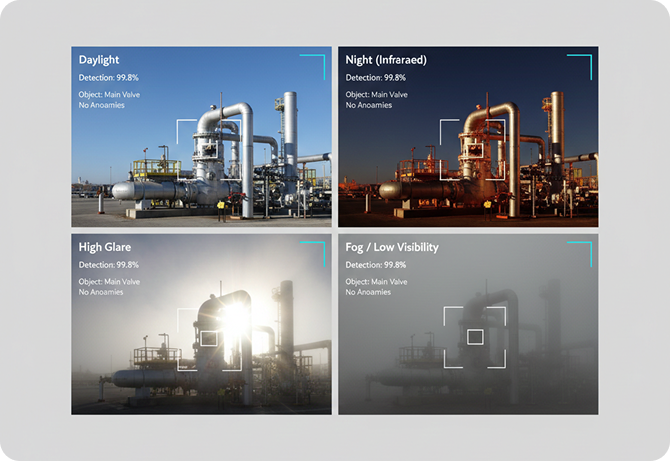

Edge cases on demand

Occlusion, low light, glare, clutter, small objects, look-alikes.

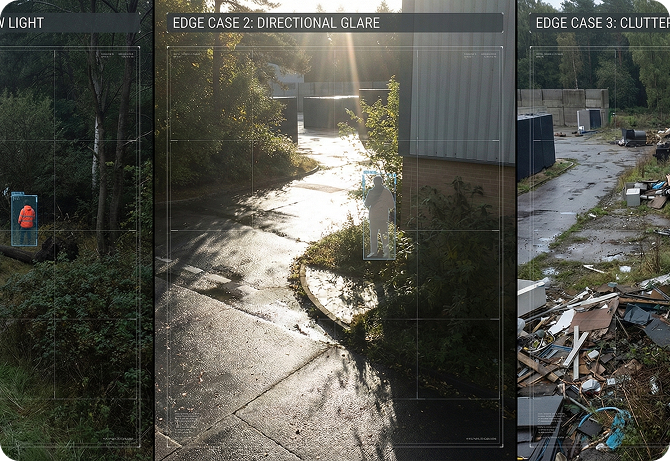

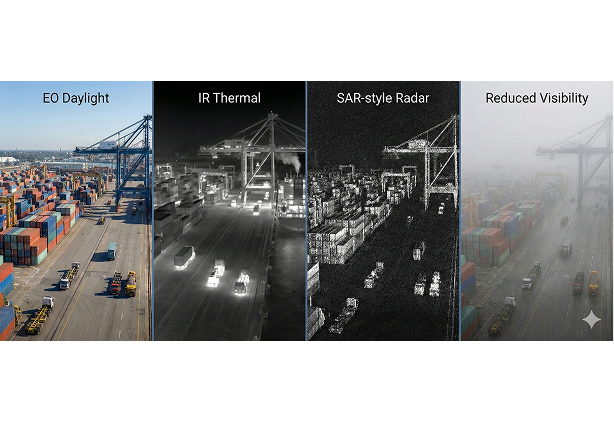

Match operational sensors

EO/IR, SAR, noise, blur, compression, optics limits.

Auto-label with QA

Rich annotations and quality reports with every dataset.

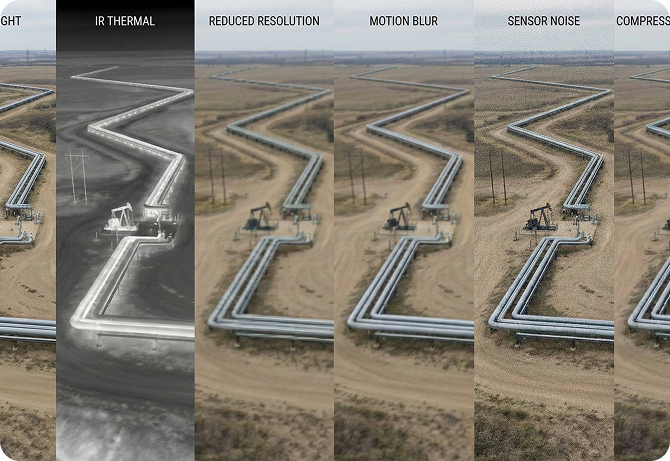

Rebalance without rebuilding

Adjust ratios and hard negatives instantly.

Export anywhere

COCO, YOLO, MMDet, or custom schemas.

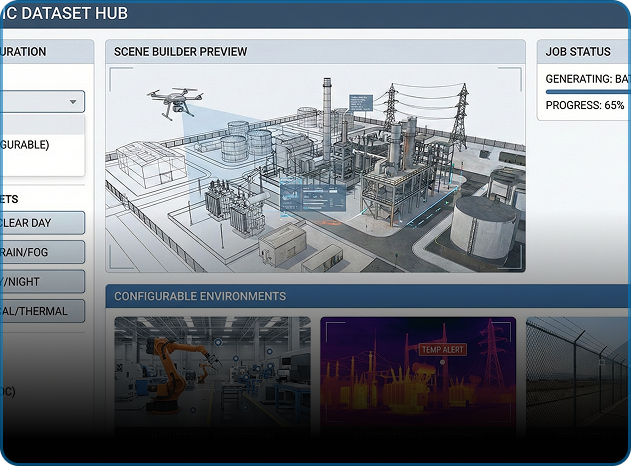

The stack

Scene Builder

Drag-and-drop assets, terrains, routes, behaviors, injects.

Sensor Forge

Physics-based EO/IR/SAR with savable presets.

Label Engine

Auto-annotation with QA reports.

Why teams trust it

Documented gains

Reduced false positives and stronger detection before live evaluation.

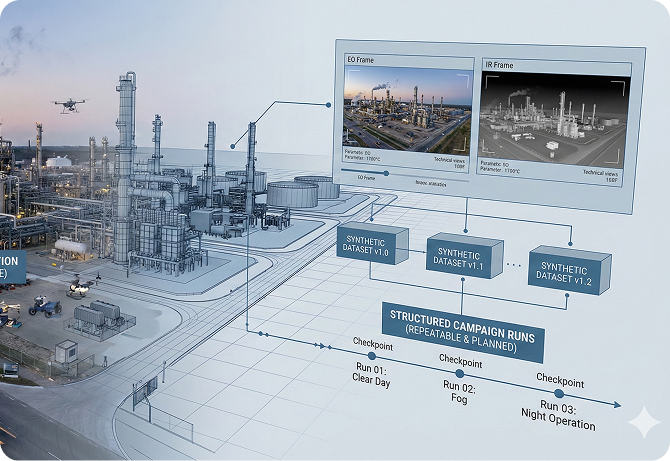

Repeatable by design

Same inputs, same outputs, measurable improvement.

Secure deployment

On-prem, air-gapped, no internet required.

Operational impact

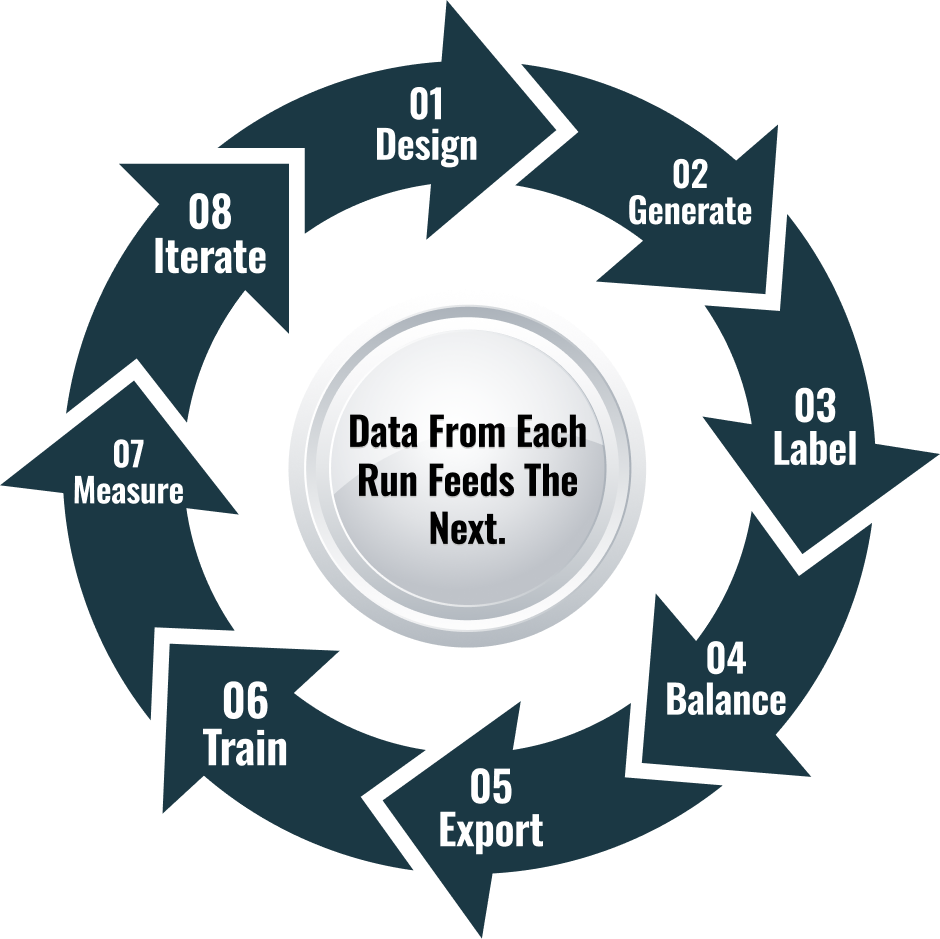

Accelerated iteration

Drive model updates between live collections.

Hard-condition performance

Improve results in night, glare, clutter, and small-target scenarios.

Automated labeling

Shift annotation from manual tools to automated workflows.

Repeatable campaigns

Replace ad hoc field collection with planned synthetic runs.

Use cases

Defense

ATR/ISR targets, look-alike discrimination, EW-degraded conditions.

Public Safety & Critical Infrastructure

Perimeter, substation, pipeline scenarios.

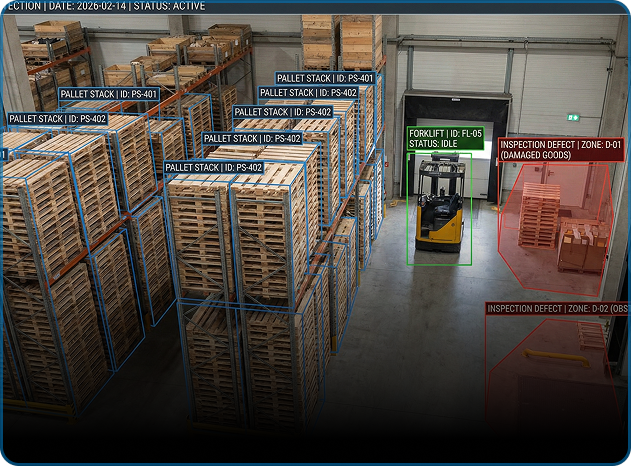

Industrial

Yard logistics, warehouse detection, defect inspection.

Platform OEMs & Integrators

Customer-specific datasets for training and acceptance.

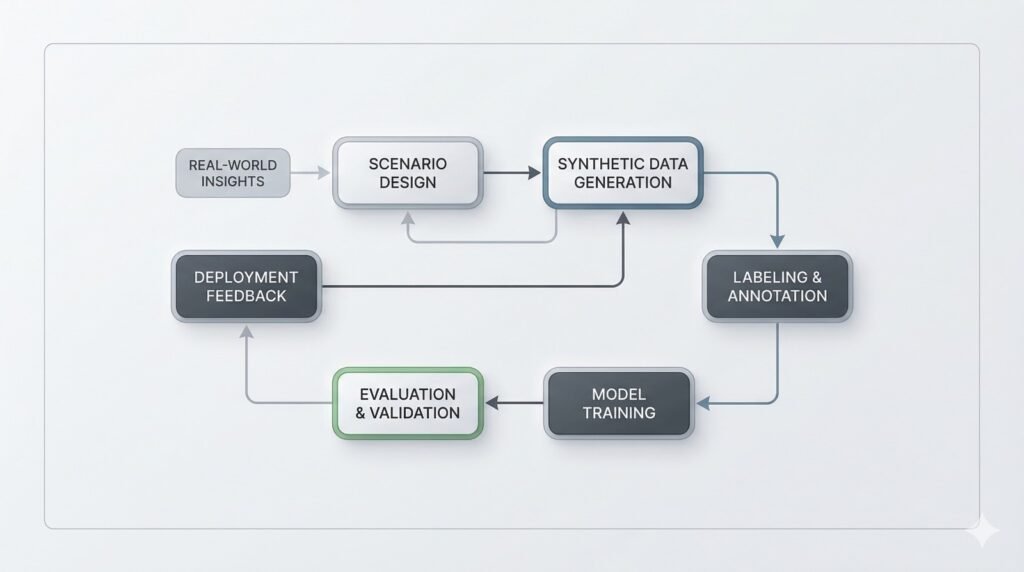

Workflow

Pricing & deployment

On-prem install. Optional cloud-assist. Start with one project, scale as needed.

Program impact at a glance

< 10 minutes

to generate an initial 1,000 labeled images in a typical configuration

Manual labeling time

reduced significantly through automatic annotation

Edge-case coverage expanded

across conditions such as night, glare, clutter, and small targets

FAQs

Yes. CAD models and terrain can be imported and tagged once for reuse.

Yes. Full label export.

Yes. No code, presets included.

COCO, YOLO, MMDet, custom.